IP 17: Building A Typology Of AI Regulation

I hope American readers are having an enjoyable Labor Day. Traditionally, of course, it’s the holiday that heralded the start of political campaigning season. That was before political campaigns basically started the day after the last election was over.

Thanks for the feedback on the most recent Substack on the risk of a regulatory Splinternet, driven by AI. This week we delve more deeply into the various models of AI regulation that are developing and what is motivating them.

I’d love to hear your thoughts on this. Please drop me a line at david@artemonstrategy.com and check out the website at www.artemonstrategy.com.

If you like this newsletter, please do subscribe and share. And please click the ♡ symbol at the top of the post - Substack’s algorithms love the like symbol.

If you’re a free subscriber, please do think about upgrading to become paid - one month is about the price of a pint of beer in London!

A Typology Of AI Regulation

Regulators, international bodies and tech companies are continuing to grapple with how AI can, and should, be regulated. The British government has announced that the first global AI Safety Summit will be held at the iconic Bletchley Park in early November. The summit will involve “like-minded countries”, academics and tech leaders.

Runway Strategies have published this super helpful tracker setting out different stages of regulation around the world. Kent Walker, President of Global Affairs at Google, gave a fascinating interview to Politico on the issue last week.

The latest report from the House of Commons Science, Innovation and Technology Committee also represents a worthwhile read, as it sets out the 12 challenges that regulators need to grapple with around AI.

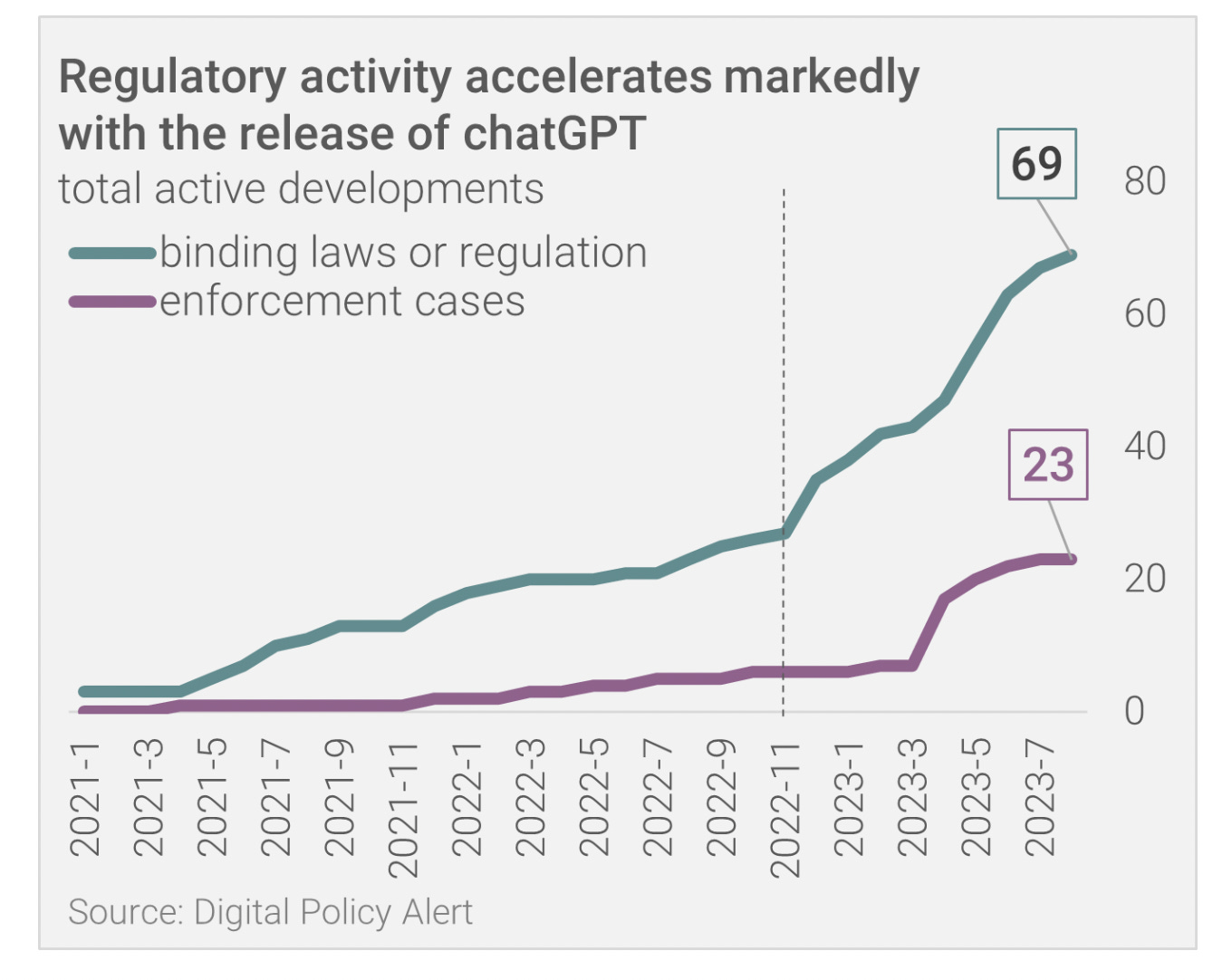

The chart below from Digital Policy Alert shows the big increase in pieces of regulation and enforcement activities since the release of ChatGPT:

Rather than simply diving into the embryonic regulations, it’s important to consider the varying motivations behind the development of AI regulation, before considering how the design of AI regulation might look in practice (and how it is already beginning to look).

Why business should care about the shape of regulation

The nature of AI regulation will shape the impact that AI can have on the economy, how businesses choose to use AI, and what the overall innovation environment looks like. Different regulatory regimes, based on profoundly different principles, might well dictate different levels of benefit from AI and different innovation investment environments. Understanding the varying models of AI regulation at this relatively early stage will help businesses navigate the emerging regulatory environment and maximise the benefits of AI without running into regulatory risk and confusion.

What do governments see as the purpose of AI Regulation?

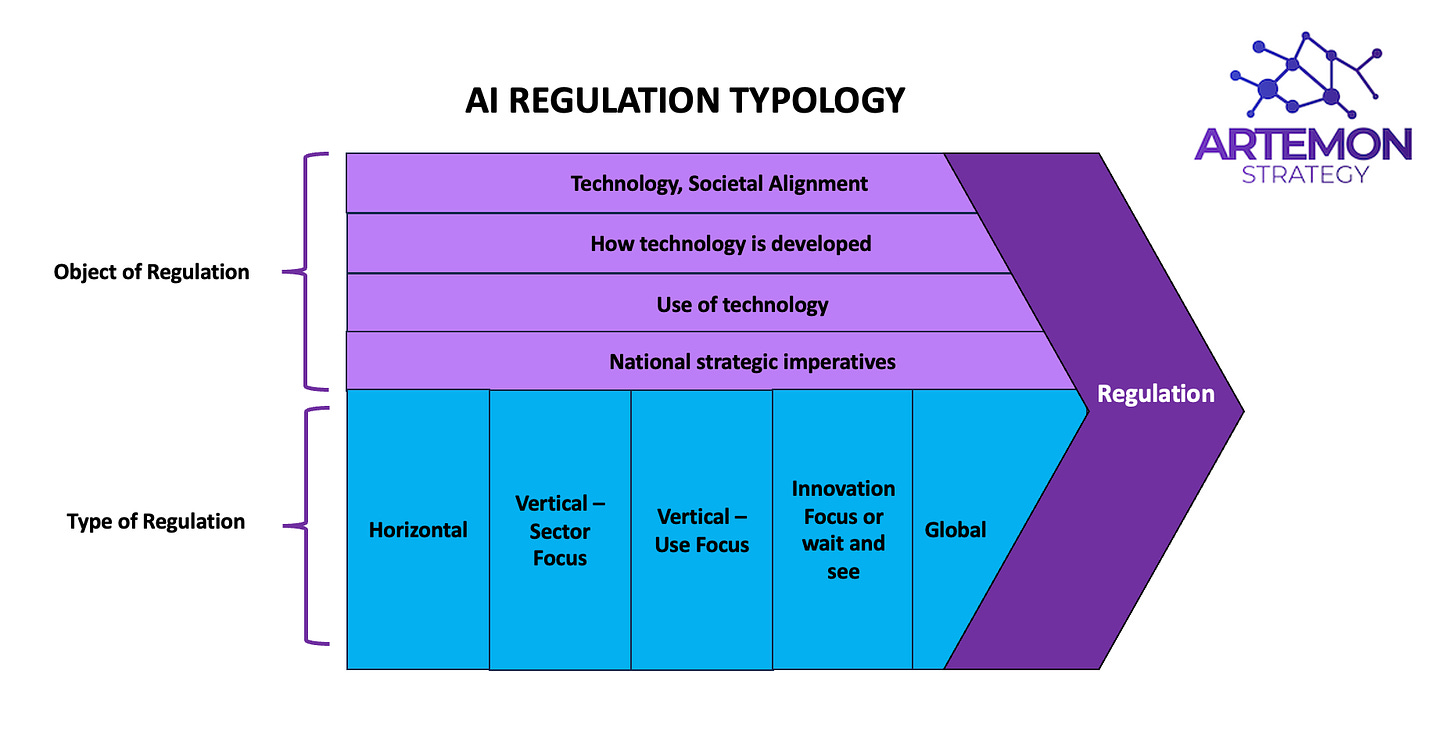

When developing a typology of AI regulation, two main elements need to be considered:

What is a government or regulator looking to achieve from regulation? What is the regulator’s purpose in regulating, and which element of the technology is it looking to regulate?

What is the mechanism by which a regulator is choosing to pursue regulation? Is this based on the technology itself or what the technology is seeking to do?

There are, at least, four different regulatory purposes for AI regulation:

Regulating the technology itself

A number of approaches to AI regulation are based on the belief that the technology itself, rather than its use, should be regulated. Part of this impulse is a response to the perception that governments responded too late to the development of the internet or the growth of social media. And part is a simple understanding that AI will have a profound impact on society and might present more dystopian risks, so there do need to be some regulatory guardrails. As such, the purpose of broad legislation will be simply to regulate AI as a whole, without being more specific about the exact purpose of the legislation. Such legislation could well differ based on type of AI, with open-source systems potentially being bound by more regulatory strictures. This element might also include the environmental impact of the greater resource needs of AI systems and data centres.

Regulating for alignment with societal principles

The broadest purpose of AI regulation is to align AI with “societal values”. This could range from the Chinese Communist Party saying that LLMs must follow the “core values of socialism” to democratic governments looking to ensure that LLMs abide by societal values, such as fairness, equality or even democracy itself.

Regulating how the technology is developed

Regulators will increasingly be concerned with several elements of how Large Language Models are developed, including:

Sources of training data for LLMs, particularly whether training data uses copyrighted material or material that might be seen as violating user privacy.

How LLMs are developed and the potential sharing of testing material, training evaluation or consideration of risks and mitigations with regulators or other bodies.

What steps AI labs and others are taking to address known issues, such as “hallucinations”.

Regulating how the technology is used

How AI is used by businesses is, in many ways, already the most mature form of AI regulation. The use of AI in, say, financial decisions has been the object of regulatory scrutiny for some time. This regulatory field is only likely to grow in coming years, with AI being more broadly involved, even at a relatively early stage, in business decisions, recruitment screening, medical matters and back office processes. More alarmingly, we already know that AI could potentially be used by bad actors for disinformation, deepfakes, mimicry and other societal ills.

Different models of regulation

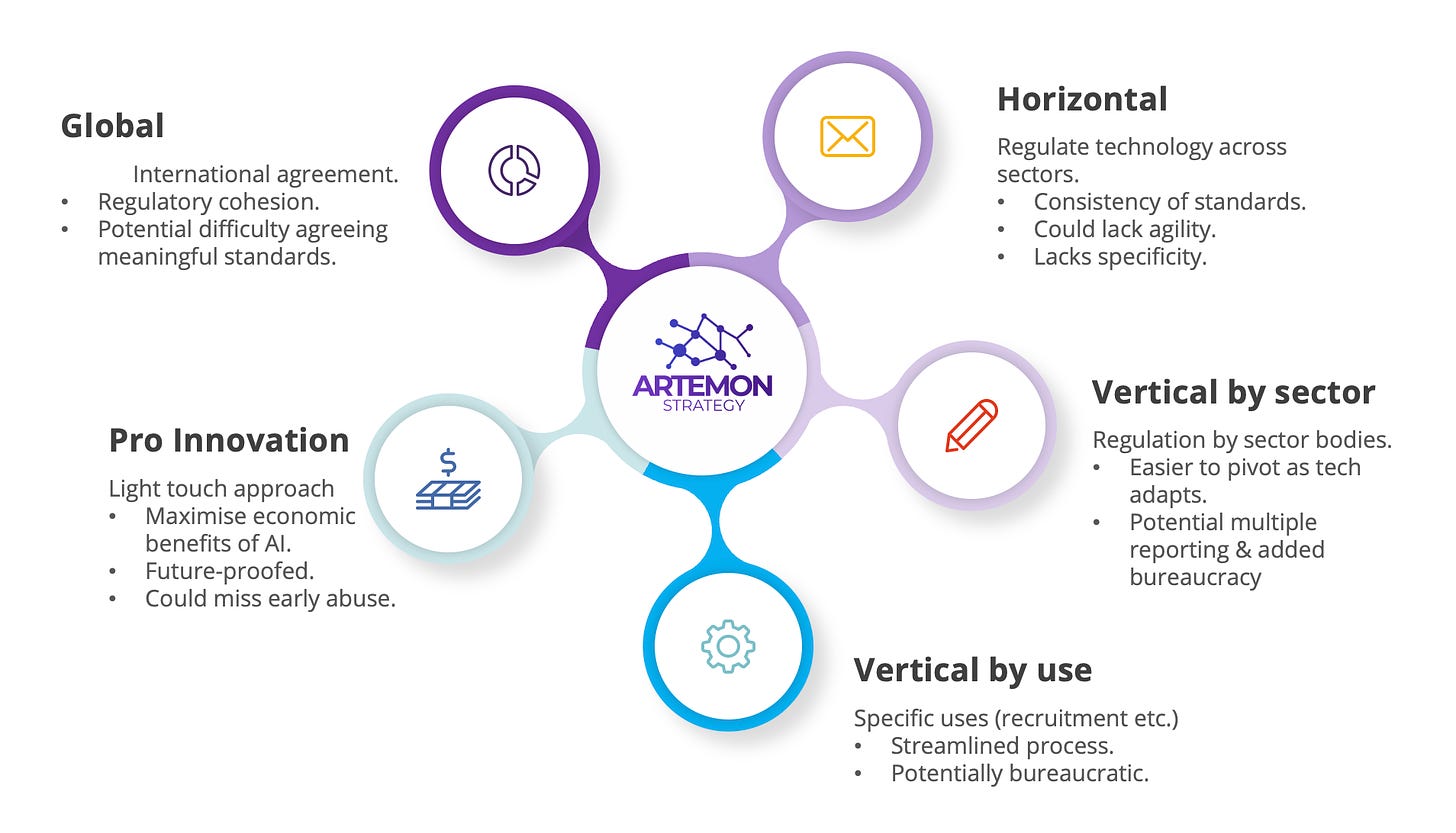

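Even at this relatively embryonic stage of AI regulation (although the EU first proposed the AI Act in 2021), there are clear patterns emerging. Initially, these can be divided into five main models: horizontal and risk based; vertical and sector based; vertical and use based; innovation focus or wait and see; and global agreements.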

An important proviso is, of course, that many countries will adopt a mixture of these models, with few adopting a “pure model” approach to regulation. Changes of government could see a radical pivot in approaches to regulation, particularly as the approach to AI becomes an increasingly central political issue. Even with this proviso, considering a typology and a model of regulation allows businesses and other organisations to navigate the uncertainty of AI regulation in a more systematic way.

Horizontal and risk based

The basis of horizontal legislation is that the technology itself needs to be regulated, across all sectors and at all levels. The European Union’s AI Act remains the best example of this kind of horizontal legislation: all sectors are subject to risk assessment, with some uses (such as surveillance) banned entirely, low-risk sectors facing transparency expectations and high-risk applications facing much more stringent enforcement.

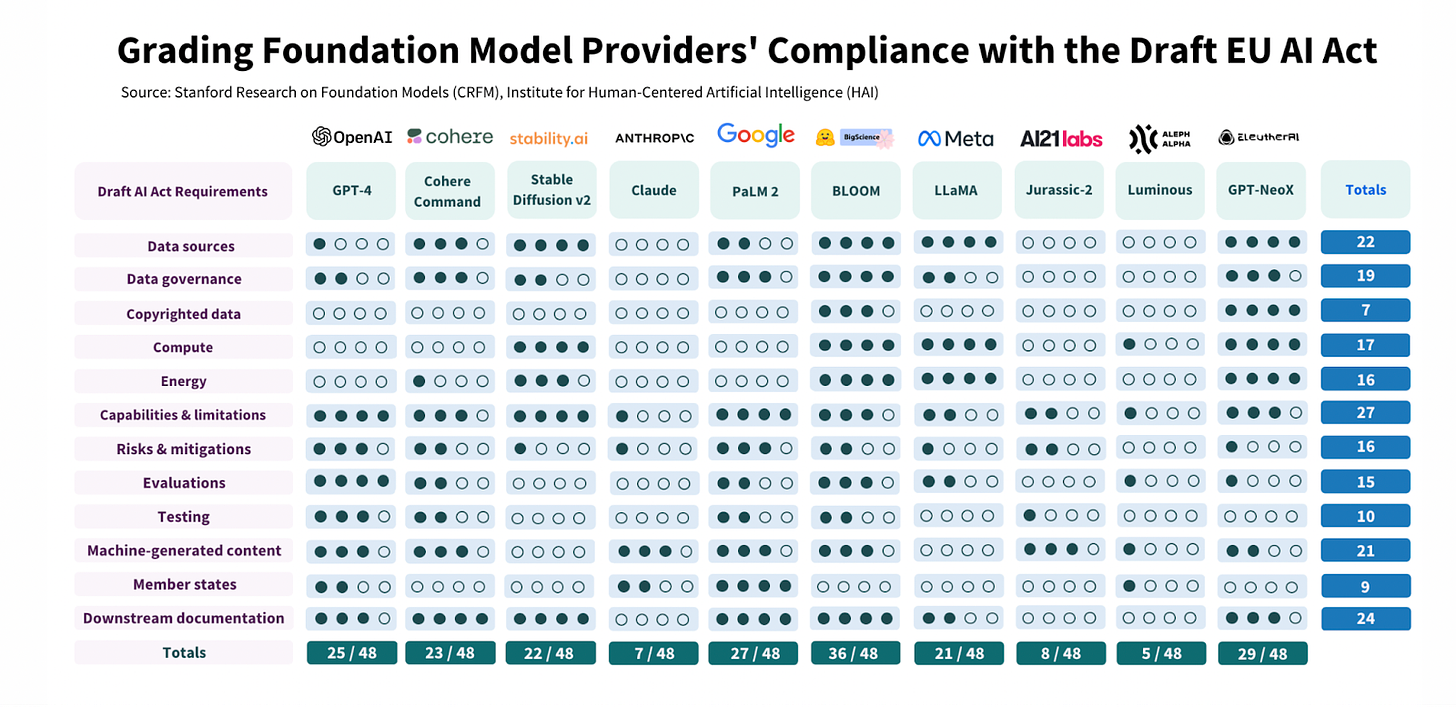

The challenges of horizontal legislation can be considerable. A rigid approach that regulates across sectors and at each stage in the development of tech can both slow innovation and accelerate potential splintering of technologies across geographies. The diagram below, from an excellent Stanford report, shows that, across the piece, foundation models would struggle to comply with all of the elements of the draft AI Act.

For many horizontal regulatory approaches, regulation itself seems to be a much greater priority than innovation, leading to a potential reduction in the incentive to innovate and to important economic impacts. It remains open to question whether a horizontal approach is a sensible way to regulate a technology that will be used in very different ways across sectors and will continue to advance, and alter, rapidly. Horizontal regulation can combine a lack of specificity because of broad application (which can increase uncertainty for business) with a lack of flexibility to regulate a technology that is likely to change quickly over a relatively short period of time.

The horizontal regulation of AI will almost certainly apply most fully to AI labs developing future models that move closer to AGI. The kind of licensing agreement that industry leaders, such as Sam Altman, have called for would fall within this category.

What would horizontal legislation mean for business? Given the importance of the European market, all global companies are likely to face some elements of horizontal legislation. As we noted in IP 13, all companies are now tech companies and will need to weigh both the regulatory risk weighting of AI use and the resulting compliance needs when developing AI strategies.

Vertical - Sector Based

The approach initially taken by British and other regulators was that existing regulation was largely sufficient to cover this stage of AI. The UK, for example, is proposing a “contextual, sector-based regulatory framework”, based largely on the existing network of regulators. So the use of AI in financial services would be regulated by the equivalent of the Financial Conduct Authority, for example, with additional engagement around privacy from the national Data Protection Authority.

As such, individual sector regulators would lead on the regulation itself, based on how the technology is being used now. This provides for a level of flexibility, agility and sector understanding that horizontal legislation can miss.

However, to have the agility that sector-based regulation could offer, regulators will need to be sufficiently tech-savvy and regulations will need to be future-proofed in a way that makes pivoting easier. Some have also expressed concerns that sectoral legislation could, if done badly, lead to multiple reporting requirements, increased bureaucracy for business and a lack of standardisation for both businesses and consumers.

What would vertical sector-based legislation mean for business? Continuing to build relationships with sectoral regulators will remain crucial, as will developing an understanding of how various cross-regulatory interactions will affect your regulatory environment. It will also be crucial to use scenario analysis to consider how the regulatory environment might shift.

Vertical - Use Based

Many American states are already introducing use-based vertical legislation, which targets individual ways in which the technology is used. Such bespoke regulation enables legislation to apply to specific applications. For example, New York City’s Automated Employment Decision Tool law requires employers who use AI in employment decisions to conduct a bias audit before using the systems, have a bias audit undertaken by a third party on an annual basis and inform applicants that such techniques are being used. Similar legislation is at least being considered in other US states, whilst others are pushing forward with legislation to mandate disclosure of the use of AI in political communications.

What would vertical use-based legislation mean for business? Use-based legislation, introduced both in a purely vertical way and as a part of horizontal legislation such as the EU AI Act, seems certain to become increasingly important as governments seek to effectively regulate various applications of AI. Companies should consider how various uses of AI might get drawn into use-based legislation and plan accordingly as part of an AI strategy.

Innovation Focus or Wait and See

Taking a light-touch approach to the initial phases of AI regulation is seen by some countries as a potential route to gaining early AI leadership and maximising the economic benefits of AI, whilst not stifling innovation with unnecessary bureaucracy. The South Korean approach might well be the closest to such a pro-innovation regulatory approach, including a number of “regulation free zones” and deregulation aimed explicitly at encouraging experimentation in AI. A light-touch approach might have limited “guardrails” against overt abuse, but its proponents regard it as too early to take a definitive view on how emerging (and rapidly changing) technology can be regulated in a way that is agile enough to keep pace with the technology. The “red flag” laws of the late 19th Century in the UK and the US are important historical examples of regulation that was rapidly outpaced by technological adoption. These laws dictated that:

“[A person,] while any locomotive is in motion, shall precede such locomotive on foot by not less than sixty yards, and shall carry a red flag constantly displayed, and shall warn the riders and drivers of horses of the approach of such locomotives, and shall signal the driver thereof when it shall be necessary to stop, and shall assist horses, and carriages drawn by horses, passing the same.”

Red flag traffic laws are an important reminder of the danger of regulating too early and being rapidly outstripped by emerging technology. Some countries might instead be adopting a “wait and see” approach - waiting for further development of technology and use cases before making firmer decisions on a regulatory framework.

What would innovation-focused legislation mean for business? If some countries decide to pursue a regulatory regime with a focus on innovation, this could have a substantial impact on investment decisions and on which countries ultimately become AI leaders. For global companies, knowing which countries are most innovation friendly might usefully inform future-facing AI strategies.

Global agreements

Reaching a global agreement on “guardrails” and licensing for AI development has been the clearly declared aim of a number of G7 summits, proposed by both the White House and Downing Street and backed by a number of industry leaders, such as Sundar Pichai and Sam Altman. The development of a CERN or IAEA equivalent for AI has been much discussed and it seems likely that one of the goals of the Bletchley Park AI Summit will be to establish the broad principles behind such a body, with the Frontier Model Forum and the OECD AI Principles potentially forming the basis for this. Ian Bremmer and Mustafa Suleyman, the co-founder of DeepMind, made an interesting case for global Technoprudentialism in Foreign Affairs. This eloquent rebuttal of the article by George Coe is well worth a read too. As George points out, a world where the US is unable to agree federal privacy legislation isn’t really a world where meaty global AI governance can be easily agreed.

As we’ve argued in a number of Inflection Points, business and political leaders should look to achieve as much regulatory cohesion as possible, or face the prospect of a regulatory splinternet. However, there are substantial hurdles to achieving genuine global agreement. Views even between democracies about the use of AI for facial recognition, for example, can differ markedly. The level of genuine cooperation between China and the US is close to a post-WTO-accession low and autocracies seem intent on using technology in a way that democracies are increasingly uncomfortable with. Realistically, any global governance is likely to be based on high-level guardrails, at best, with forums like the G7 offering greater potential for meatier regulatory processes.

What might global agreements mean for business? It’s important for cross-border businesses to continue making the case for as much regulatory cohesion as possible. The engagement of business in the development of these agreements is also important.