IP21: Inflection Points In Tech For 2024

Happy New Year to everyone. Thanks so much for your support, thoughts and occasional interrogation throughout 2023.

It’s a time for resolutions. And one of my New Year’s Resolutions is to write these Substacks more regularly. Please hold me to that!

People who become paid subscribers really help and, in a spirit of New Year generosity, I’m knocking a full 50% off all subscriptions. I’ll also be introducing subscriber-only posts in 2024. The link to the discount page is here. The offer closes at the end of January.

And now on to 2024, which could be yet another big year in tech policy. But what will the big inflection points be? I’d imagine that these will all be issues that I’ll be coming back to in more detail as the year goes on.

Inflection Points In Tech 2024

Elections will accelerate discussion about AI regulation

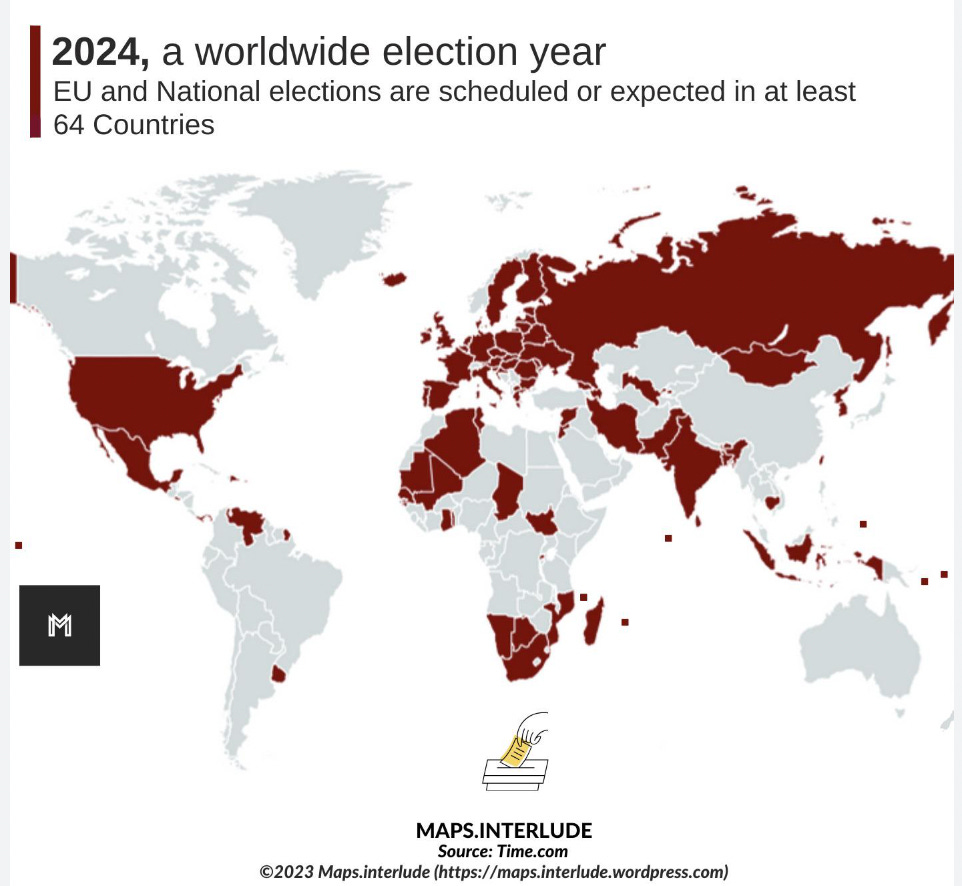

The Taiwanese elections were held yesterday and we know that Chinese-produced disinformation was pumped out as polling day approached. As we covered in IP19, about half the population of the world will be casting their ballots this year, including in the United States, India, the European Union, Indonesia and the UK.

Elections in the past have served to intensify scrutiny of the impact of tech and user-generated content on the electoral process. The elections of 2016, for example, led to a considerable acceleration of the techlash and to a whole wave of tech regulation.

The 2024 wave is likely to see a focus on how AI is impacting elections through increasingly elaborate deepfakes, a growing capacity for mimicry and a growth in the hyper-personalisation of campaigning. AI also puts the tools to create disinformation in the hands of more and more people (there’s no need for troll armies now).

How AI impacts this bumper election year could help shape the boundaries for how AI is regulated and whether political parties come to agreements about how it should be used. Equally, there needs to be a wider discussion about how emerging technologies can be used to strengthen and empower democracies.

Sovereign AI will become more important

In early December, my old Google colleague, Pablo Chavez, wrote a fascinating LinkedIn post about Sovereign AI capabilities being the “second wave of AI”, with sovereign AI defined as “the strategic development and deployment of AI technologies by national governments to protect national sovereignty, security, economic competitiveness, and societal well-being.” The Indian and Singaporean governments have already made firm proposals and there has been a push for Sovereign AI in the European Union, Australia and the UK (including from Tony Blair).

It is variously argued that such capabilities are necessary for national security reasons or to ensure that economic benefits are maximised. Sovereign AI is a further step in the debate about digital sovereignty that was championed by President Macron and others. The case made for Sovereign AI capabilities by the Indian government also emphasises the importance of independence from American big tech.

The ill-fated Franco-German Quaero search engine is a reminder that government-led technology schemes aren’t always a dazzling success. But the Sovereign AI strand of an emerging industrial policy isn’t going to go away. It could mark a further step in the fragmentation and splintering of the global internet, which we have discussed in previous IPs (and do again below), or it could represent a shift towards greater governmental cooperation about design and capabilities. Sovereign AI capabilities, like digital sovereignty, also make it harder for global companies to appear local in different markets and to deal with local sensitivities.

Fragmentation could continue, possibly driven by decisions around copyright, privacy and data

Inflection Points has touched frequently on the topic of the splinternet and fragmentation of the global internet, including in IP16 and in IP11. Despite the push for broad global standards, the success of the AI Safety Summit at Bletchley Park and high hopes for the upcoming follow-up summit in South Korea, it seems likely that different models of internet regulation will lead to a patchwork quilt that businesses need to navigate.

The New York Times’s copyright lawsuit against OpenAI is a reminder that AI models will be coming under pressure for their use of copyrighted content, and similar pressures might well be seen around privacy and data. Countries will likely respond to these pressures differently, meaning that models, product rollout and investment could also differ by country. This is also the case at a sub-national level. For example, in the United States, with its seemingly permanently gridlocked federal government, states seem to be taking the lead in regulating AI and other elements of tech.

The discussion over open source and how it is regulated will become more pronounced

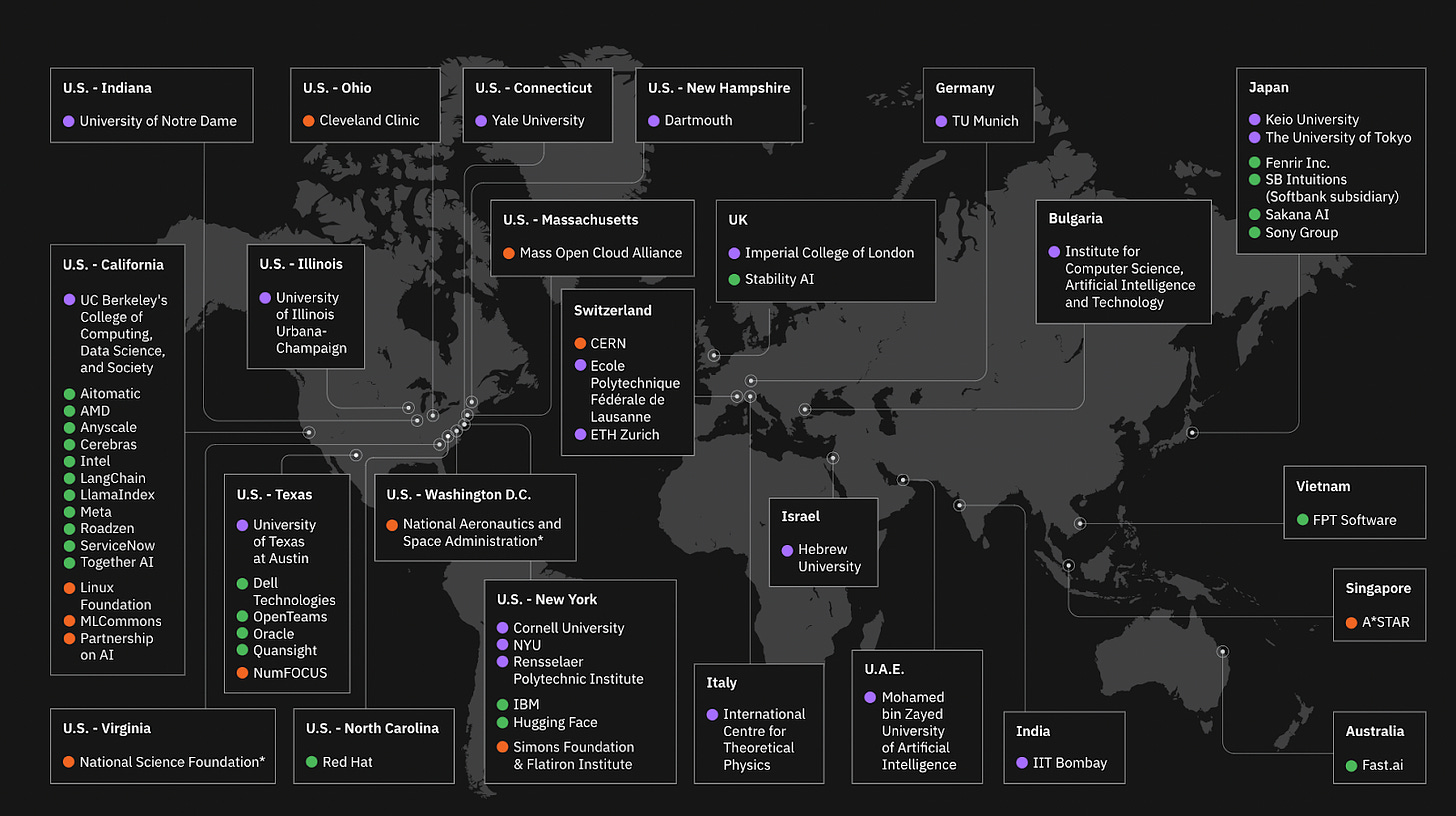

As we know, generative AI made great strides in 2023 and so did open source AI. The chart above from the AI Alliance shows the scale of open source projects that are part of the alliance of open source providers.

Andreessen Horowitz’s rationale for investing in France’s Mistral was also an interesting read. Key line:

Mistral is at the center of a small but passionate developer community growing up around open source AI. These developers generally don’t train new models from scratch, but they can do just about everything else: run, test, benchmark, fine tune, quantize, optimize, red team, and otherwise improve the top open-source LLMs. Community fine-tuned models now routinely dominate open source leaderboards (and even beat closed source models on some tasks).

Open source provides the potential for a greater variety of AI models to be developed, and some of these models might lack the often self-imposed safety rails built into closed models.

This will form part of the discussion of how open source models should be regulated. Part of the discussion over the EU’s AI Act involved the French pushing for open source models to be regulated more leniently. By contrast, the Biden Executive Order seemed to place an emphasis on closed models and the administration has expressed a desire to regulate open source differently. This was a discussion picked up by the WEF recently. As more businesses start to use open source, and as differentiated open source models emerge, the debate over how open source should be treated and regulated might well intensify.

Upskilling will become a prominent issue

Despite a series of sci-fi inspired dystopian warnings, a number of polls have shown that the biggest worry most people have about AI is how it will impact their jobs. As generative AI is used in more and more industries, it seems likely that these pressures will only increase. It’s true that AI will boost productivity and allow people to focus more on value-adding tasks, but it’s also true that the half-life of a skill (the amount of time it takes for a newly acquired skill to halve in value) is now as low as two and a half years for some skills, when it used to be measured in decades. In this environment, the expectation that workers will upgrade their skills as their careers go on will increase, as will the expectation that managers will play a more active role in this. But this environment will also increase the pressure on tech companies and governments to bulk up their support for reskilling measures, potentially through public-private partnerships or in partnership with business groups and labour unions.
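To put rough numbers on the half-life point, here is a minimal sketch in Python. It assumes that a skill’s value decays exponentially, which is a simplifying assumption rather than anything the half-life figures themselves establish. The function name `remaining_value` and the 20-year comparison figure are hypothetical choices made purely for illustration.

```python
def remaining_value(years: float, half_life_years: float) -> float:
    """Fraction of a skill's original value left after `years`.

    Assumes simple exponential decay (an illustrative assumption):
    the value halves once every `half_life_years`.
    """
    return 0.5 ** (years / half_life_years)


# Compare a 2.5-year half-life with a hypothetical 20-year one
# over the first decade after the skill is acquired.
for half_life in (2.5, 20.0):
    for years in (5, 10):
        share = remaining_value(years, half_life)
        print(f"half-life {half_life} years, after {years} years: {share:.1%} of original value")
```

On those assumptions, a skill with a two-and-a-half-year half-life keeps only a quarter of its value after five years, since five years is two half-lives. That gap is what sits behind the growing pressure for continuous reskilling.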

Under-16 screen time could be a major issue

It’s hard to predict with certainty what the nascent areas of tech regulation might be, but when you combine initial bills, a potentially high-profile book launch and the instinct of many voters, it seems likely that regulators could be making a push around screen time for under-16s.

Jonathan Haidt’s The Coddling of the American Mind had a huge impact on the debate around universities and “wokeness”. His 2022 Atlantic essay arguing that the last ten years of US life had been “uniquely stupid” (largely through an emphasis on virality in social media) was influential within the tech community. Haidt is someone who can make a big splash and be quoted reverentially across the political spectrum. As such, it’s likely that his March book, The Anxious Generation, will shape at least some of the emerging debate. The book’s subtitle reads “how the great rewiring of childhood is causing an epidemic of mental illness” and it will seemingly make the case that children should have their screen time limited.

This is, in many ways, a continuation of controversies around the attention economy and a push for age-appropriate design, with a number of US states already legislating around teenage social media use and the UK government reportedly considering taking further measures. It seems clear that this will be an increasingly important debate as the year goes on.